Predictive modeling is the art of using high-powered computing to learn from the past and predict the future. Most of us use predictive models in our daily lives, but it’s not always obvious.

For example, when we use an app to navigate through traffic or watch a movie because a streaming service recommended it, that’s predictive modeling at work. That routing app will pull both historical and real-time data on road conditions to predict the fastest route. Your movie suggestions will be chosen based both on what you’ve enjoyed before and the new-to-you movies enjoyed by thousands of people who share your interests.

Modern predictive modeling cannot replace human judgment, but it can make it easier to choose a good option in a landscape cluttered with an endless stream of content and choices.

But in order for these predictive models to work, they have to learn from a set of known truths. In other words, they need to know what’s happened in the past. The routing app needs to know, at a minimum, whether traffic on the road you’re driving is getting steadily worse or improving. It would help if the app also knew what patterns the traffic follows on weekday mornings versus weekend afternoons. That streaming service you use to watch your favorite shows and movies will struggle to identify recommendations you’ll enjoy unless it has access to your viewing history.

For a predictive model to know how the world works, it has to learn from how the world has worked before. It needs history for training. The further back that history goes – with more detail and more accuracy – the better the model will be at predicting how the world will work in the future. The same is true of people: those with a lot of experience are likely to understand their area of expertise better than newcomers, because they’ve seen how things play out.

Not all data is equal

We have to be careful with what learning material we give the predictive model. Not all data is useful, and not all correlations are meaningful. You know that person who wears a “lucky shirt” to each of his team’s games? Models also have a weakness for lucky shirts, especially when the model doesn’t have enough reliable history on which to base its predictions. Without enough high-quality data, forecasts become brittle when faced with unexpected events or new information.

In the forecasting business, this is called “overfitting”: a model is given so many ways to explain anomalous events that it never has to learn what the underlying relationships really are.

For example, a baseball model may “learn” that a pitcher is killer against left-handed batters with names that start with a T. While that may have been true so far, it’s not particularly useful for predicting the outcome of the next at-bat. An overfit model will do a great job of describing the past, and a bad job of predicting the future. So when we investigate new information to include in a predictive model, we need to be sure that this new information is both reliable and meaningful.
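To make the idea concrete, here is a minimal sketch in Python using invented numbers (not real freight or baseball data). It compares a two-parameter straight line with a polynomial flexible enough to pass through every past observation. The function names, the weekly values, and the held-back "next week" value are all assumptions made for illustration.

```python
# Illustrative only: made-up weekly values with a steady upward trend
# plus alternating noise. None of this is real freight data.
weeks = [0, 1, 2, 3, 4, 5]
rates = [1.0, 1.0, 5.0, 5.0, 9.0, 9.0]
next_week, next_rate = 6, 13.0  # the "future" value, hidden from both models


def fit_line(xs, ys):
    """Ordinary least-squares line: two parameters, so it can only learn the trend."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return lambda x: intercept + slope * x


def fit_interpolating_polynomial(xs, ys):
    """Lagrange polynomial through every point: one parameter per observation."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict


def mean_abs_error(model, xs, ys):
    """Average absolute gap between the model's predictions and the observations."""
    return sum(abs(model(x) - y) for x, y in zip(xs, ys)) / len(xs)


simple = fit_line(weeks, rates)
overfit = fit_interpolating_polynomial(weeks, rates)

print("error on the past (simple, overfit):",
      mean_abs_error(simple, weeks, rates),
      mean_abs_error(overfit, weeks, rates))
print("error on next week (simple, overfit):",
      abs(simple(next_week) - next_rate),
      abs(overfit(next_week) - next_rate))
```

With these made-up numbers, the flexible model reproduces the past perfectly but forecasts roughly -51 for the next week. The straight line misses the past by about 0.9 on average, yet its forecast of about 11.4 lands much closer to the held-back value of 13.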

Predictive models in trucking

In the trucking world, spot rates are important not just for businesses that operate in the spot market, but also as a leading indicator of contract rates. Predicting the movement of spot rates goes a long way toward eliminating uncertainty in the freight industry, so the DAT iQ team set about the task of using the industry’s largest database of spot market transactions to create the most accurate predictive model available in trucking.

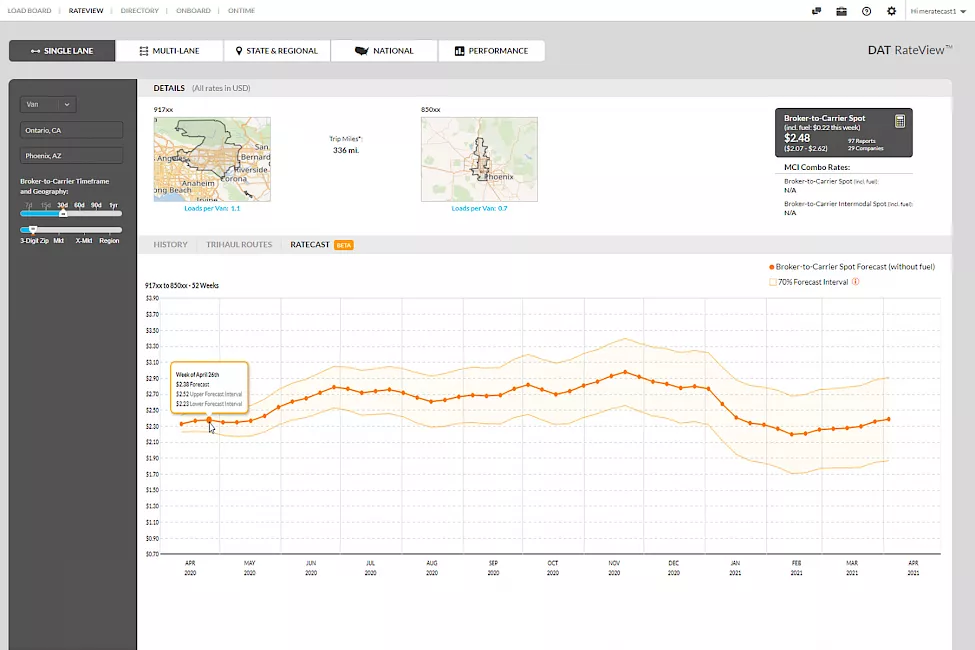

This database is already available to DAT RateView users, providing insights into current market rates and pricing histories on more than [LANE_NUMBER] trucking lanes. But when we dug into the problem of forecasting freight rates, we found that the best model was not actually a single model, but rather a suite of models that we call Ratecast.

Ratecast applies the predictive model that best fits each lane, and every day, Ratecast rebuilds the models in response to the latest data. Again, this doesn’t replace human judgment, but it distills what would be an overwhelming amount of information and presents it to a DAT RateView user in a way that allows them to rapidly and reliably make impactful business decisions.
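The general idea of choosing a model per lane can be sketched in a few lines of Python. This is not how Ratecast is implemented; the lane names, rate histories, and the three simple candidate forecasters below are all hypothetical. The sketch holds back the most recent points of each lane's history, scores every candidate on them, and keeps the one with the lowest error.

```python
# Hypothetical sketch: lane names, rates, and candidate models are invented.

def naive_last(history):
    """Assume the next value equals the most recent one."""
    return history[-1]


def moving_average(history, window=4):
    """Average of the most recent `window` values."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def drift(history):
    """Last value plus the average change per step seen so far."""
    if len(history) < 2:
        return history[-1]
    return history[-1] + (history[-1] - history[0]) / (len(history) - 1)


CANDIDATES = {
    "naive_last": naive_last,
    "moving_average": moving_average,
    "drift": drift,
}


def backtest_error(model, series, holdout=3):
    """Mean absolute one-step-ahead error over the last `holdout` points."""
    errors = []
    for t in range(len(series) - holdout, len(series)):
        prediction = model(series[:t])  # the model only sees data before time t
        errors.append(abs(prediction - series[t]))
    return sum(errors) / len(errors)


def select_models(lane_history, holdout=3):
    """Pick, for each lane, the candidate with the lowest backtest error."""
    chosen = {}
    for lane, series in lane_history.items():
        scores = {name: backtest_error(model, series, holdout)
                  for name, model in CANDIDATES.items()}
        chosen[lane] = min(scores, key=scores.get)
    return chosen


lane_history = {
    "steady_climb": [2.0, 2.1, 2.2, 2.3, 2.4, 2.5, 2.6, 2.7],
    "choppy_flat": [2.0, 2.4, 2.0, 2.4, 2.0, 2.4, 2.0, 2.4],
    "level_shift": [2.0, 2.0, 2.0, 2.0, 3.0, 3.0, 3.0, 3.0],
}

chosen = select_models(lane_history)
forecasts = {lane: CANDIDATES[name](lane_history[lane])
             for lane, name in chosen.items()}
print(chosen)
print(forecasts)
```

In this toy data, each lane ends up with a different winner: the trending lane prefers the drift model, the choppy lane prefers the moving average, and the lane with a sudden jump prefers the naive model. Re-running `select_models` whenever new observations arrive is one simple way to mimic a daily rebuild.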

Ratecast provides both 52-week and 8-day forecasts. The long-term forecasts arm transportation companies with added insights when bidding on RFPs and allow analysts to pinpoint when truckload capacity will be hard to find so that they can plan in advance. The short-term forecasts provide same-day or next-day pricing guidance and allow carriers to strategically position their equipment in anticipation of the next wave of demand.

Expanding these tools

We’re not stopping with these features, however. The DAT iQ team exhaustively tested this first-generation forecasting methodology to ensure that it’s the most reliable foundation possible. But that’s exactly what this is: a foundation.

Going forward, we’ll continue to investigate leading predictors that can add real, reliable signal to our models. We’ll test cutting-edge algorithms against our top-performing models to improve accuracy. We’ll expand our ability to provide forecasts for smaller geographies and to address additional pain points. And we’ll continue to deliver new data science and analytics tools to combat uncertainty, helping you make smarter, more informed decisions.